Introduction to Biostatistics 05 – Power and Sample Size

This lecture explores the concepts of statistical power and sample size. It addresses the common "not significant" dilemma: does a lack of significance mean there truly is no treatment effect, or was the study simply too small to detect one? The session explains Type II errors (false negatives), how to calculate the power of various tests (t-test, ANOVA, z-test), and how to determine the number of subjects needed before starting an experiment to ensure reliable results.

Learning Objectives:

By the end of this lecture, students will be able to:

- Define power as the probability of correctly rejecting a false null hypothesis, that is, of detecting a "true positive."

- Differentiate between Type I (Alpha) and Type II (Beta) errors, using the analogy of pregnancy tests to illustrate false positives and false negatives.

- Identify the factors that influence power, including alpha level, sample size, population variability, and the size of the treatment effect.

- Calculate the non-centrality parameter for t-tests and ANOVA to determine power using standardized charts.

- Determine required sample sizes for various study designs (comparing means, proportions, or relative risk) to achieve a desired power level (typically 80% or higher).
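The factors that influence power can be explored numerically. The following is a minimal sketch, not the chart-based method used in the lecture: it assumes a normal (z) approximation for a two-sided comparison of two means with equal group sizes and a common standard deviation, so it slightly overstates power for small samples where the t distribution applies. The function name `approx_power_two_means` and its parameters are illustrative assumptions.

```python
from math import sqrt
from statistics import NormalDist


def approx_power_two_means(delta, sigma, n_per_group, alpha=0.05):
    """Approximate power of a two-sided comparison of two means.

    Normal approximation (assumption): equal group sizes, common
    standard deviation sigma, true difference in means delta.
    """
    z = NormalDist()
    # Critical value that defines the two-sided rejection region
    z_crit = z.inv_cdf(1 - alpha / 2)
    # Standardized shift of the test statistic under the alternative:
    # (delta / sigma) * sqrt(n / 2)
    shift = abs(delta) / (sigma * sqrt(2 / n_per_group))
    # Probability of landing in either rejection region
    return z.cdf(shift - z_crit) + z.cdf(-shift - z_crit)


if __name__ == "__main__":
    # Power rises with sample size for a fixed effect and variability
    for n in (5, 10, 20, 40):
        print(n, round(approx_power_two_means(1.0, 1.0, n), 3))
```

With no true difference (`delta = 0`) the function returns alpha, the Type I error rate. Increasing `n_per_group`, `delta`, or `alpha` raises the result, while increasing `sigma` lowers it.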

Topics covered in this lesson

- Reporting a p-value greater than 0.05 does not provide definitive proof that a treatment has no effect if the study lacks sufficient statistical power.

- Overlapping distributions, one under the null hypothesis and one under a true treatment effect, show how the effect shifts the data away from the null hypothesis and how likely a true positive result is.

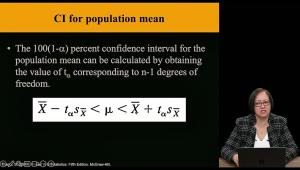

- The non-centrality parameter for a t-test is determined by the ratio of the difference to be detected to the standard deviation, adjusted for the sample size.

- Calculating power for ANOVA involves determining a specific non-centrality parameter based on the number of groups and the expected minimum difference between them.

- Specific procedures are used to calculate the power for categorical data, including the Z-test for proportions and contingency tables.

- Re-evaluating an anesthetic study of the cardiac index demonstrates how a 15% difference was likely missed because the study had only 11% power.

- A robust study design requires using pilot studies or existing literature to estimate the standard deviation and the expected effect size to calculate the required sample size.

- Power curves found in statistical appendices allow researchers to determine the necessary sample size based on numerator and denominator degrees of freedom.
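As a complement to the power curves, the sample size step can be sketched in code. This is a hedged illustration under stated assumptions, not the lecture's procedure: it uses the standard normal-approximation formulas for two equal-sized groups and a two-sided test, which give slightly smaller numbers than t-based charts for small studies. The function names and parameters are hypothetical, and the estimates of `sigma`, `delta`, `p1`, and `p2` would come from a pilot study or the literature.

```python
from math import ceil, sqrt
from statistics import NormalDist


def n_per_group_means(delta, sigma, alpha=0.05, power=0.80):
    """Subjects per group needed to detect a difference in means.

    Normal approximation (assumption): n = 2 * ((z_a + z_b) * sigma / delta)^2
    """
    z = NormalDist()
    z_a = z.inv_cdf(1 - alpha / 2)  # two-sided critical value
    z_b = z.inv_cdf(power)          # quantile for the desired power
    return ceil(2 * ((z_a + z_b) * sigma / delta) ** 2)


def n_per_group_proportions(p1, p2, alpha=0.05, power=0.80):
    """Subjects per group needed to detect a difference in proportions.

    Normal approximation without continuity correction (assumption).
    """
    z = NormalDist()
    z_a = z.inv_cdf(1 - alpha / 2)
    z_b = z.inv_cdf(power)
    p_bar = (p1 + p2) / 2  # pooled proportion under the null hypothesis
    numerator = (
        z_a * sqrt(2 * p_bar * (1 - p_bar))
        + z_b * sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return ceil(numerator / (p1 - p2) ** 2)


if __name__ == "__main__":
    # Detect a difference of one standard deviation with 80% power
    print(n_per_group_means(delta=1.0, sigma=1.0))
    # Detect a drop in event rate from 50% to 30% with 80% power
    print(n_per_group_proportions(0.5, 0.3))
```

Halving the difference to be detected roughly quadruples the required sample size, which is why a realistic estimate of the effect size matters as much as the estimate of the standard deviation.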
